Wrong Facts, Perfect Systems

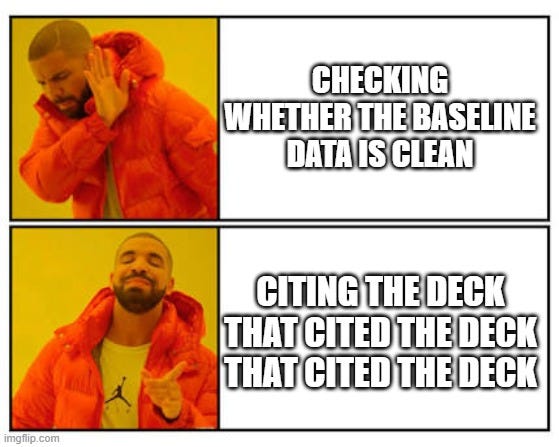

How I taught my AI to catch what my team keeps missing

Some version of this happened to me about three times last week:

A PM presented a report showing a significant traffic spike in a specific period. The numbers were pulled correctly. The charts looked clean. The analysis was thorough.

I asked one question: did you check whether that date range includes the weeks we had a bot network scraping our sites?

They h…