Why SaaS got priced out

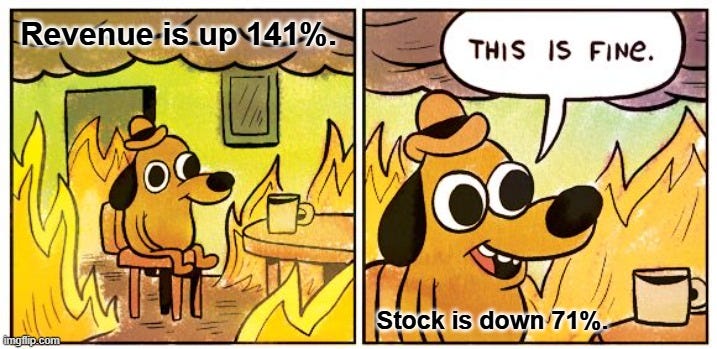

HubSpot is up 141%. The market doesn't care.

Classical B2B SaaS is not doing that well anymore. Not because the businesses are broken, but because the market has already decided the future isn’t there.

Broad software indices are down ~25% from their 12-month highs. The median public SaaS valuation multiple was 18.6× revenue in 2021 and 5.1× at the end of 2025. That’s 73% compression, while most of the underlying b…